Implementing DINO from scratch

Infos

- Paper: Emerging Properties in Self-Supervised Vision Transformers ICCV 2021

- My GitHub implementation: link

- Overall Score: 3/5

Paper

What is the paper about?

- DINO is a form of self supervised learning used for vision transformers (ViT).

- The goal is to train a model which, given an image, returns features that are relevant and descriptive of the input.

- These features can then be used as a starting point to perform different vision tasks (like object detection or semantic segmentation).

- The key here is that no labels are required (making it cheap and scalable) and that using these learned features is better than starting the learning from scratch.

What is the core idea?

- The core idea is to take two instances of the same model (student and teacher) and make sure that the output that they return is the same.

- The two models are fed different views of the same image, and they are trained to return the same output.

- To make sure that a trivial solution is not learned two things are used: - a centering and sharpening of the model's outputs - updating the teacher model using an exponential-moving-average

- Surprisingly, the features that these models learn are a good description of the overall image.

Implementation Journal

Getting things started

- In order to reproduce this paper I needed two main things: - a dataset that I could train on. - a working implementation of a ViT.

- For the dataset I went with my trusted Pascal-VOC. It's pretty small (~17k images and 636M in disk size) which makes its ideal for my low hardware budget.

- For the model, I first decided to just use the pytorch implementation of ViT. This makes sense, since it's efficient and I know it's correct. I implemented a wrapper class around the one from pytorch and I was good to go.

- But.. I then kept reading the paper and saw that the data augmentation that is used (multi-crop) requires different image sizes. This means that my positional embedding for the standard image size will not work for smaller crops. I then had two choices: - scale up the small crops to the same size as the other images (which is not what the paper does) - or change the implementation of the ViT forward pass to handle this.

- Reluctantly, I went for the second approach. But I decided that if I had to rewrite this part of the code, I could use my old implementation of ViT since I was already familiar with it.

- After implementing the multi-crop view in the dataloader and testing that things actually worked with my hacked ViT I started with the loss.

- The loss should make sure that the output for the teacher and student for different views are the same. The teacher consumes global views (big crops) of the image, whereas the student both global and local views (small crops). So 3 forward passes are needed to get all outputs.

- After finishing all of this, I launched my first training. Of course It did not work..

Debugging

Here is a list of things that I got wrong in the first implementation:

- Teacher and model initialized with different weights: - I guess this is not technically wrong but it definitely helped stabilize training at the beginning.

- Setting a small number of output classes: - Having taken my own implementation of ViT, I didn't even think of changing the number of classes (20) that I had in my code. - But after reading the paper more I found that the official implementation uses . Since my dataset and model were much much smaller than the official one, I used .

- Buggy outputs - This one was difficult to spot. I wrote two post processing functions for the student and teacher outputs. I applied sharpening for the student and softmax and for the teacher centering, sharpening and softmax. - In my loss function I somehow re-applied softmax to my student outputs which lead to negative and NaN losses.

- Wrong matching in the loss: - In the loss you need to match the outputs of the teacher with the outputs of the student (except for the same global view). - In my first implementation I handled batching in a wrong way leading to a mixing of teacher/student outputs.

- Overfitting a single image - I usually try to overfit immediately to a single image to see if there is anything wrong with my code. - Here, I lost a bit of time trying to define a good overfitting strategy. Since augmentations are used, if I removed everything, I would get 0 losses which is good but not really helpful. Adding cropping back did help debug but not quickly. It actually takes a good while before the loss starts going to 0. I could have saved myself some time by understanding that this was an expected behavior and not a bug.

Conclusion

- The paper is well written. You get the idea quite quickly and there are only a few cryptic chapters in it.

- Implementation wise I would say it's low-medium difficulty. If you are familiar with ViT then the positional embedding interpolation is fairly easy.

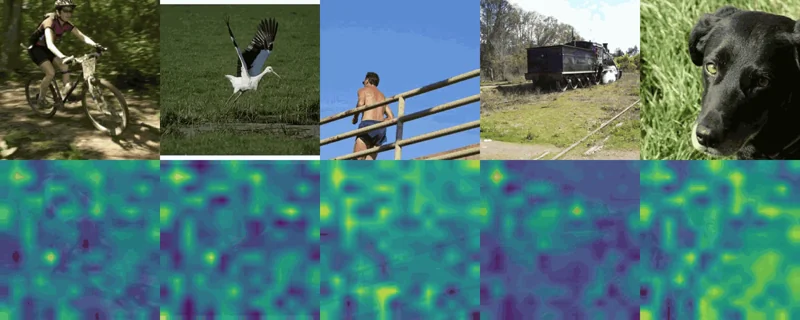

- Result wise, I of course just wanted to see if it worked and if I could reproduce qualitatively the results from the papers. I was quite surprised that after 1 night of training on my MacBook Pro M1 with 8GB the small ViT was already showing first sings of relevant attention masks.